Former NFL Player, Microsoft Team Up to Make Technology More Accessible

Microsoft campus (Courtesy of Microsoft)

Microsoft opened its 2014 Super Bowl ad with the question: “What is technology?”

As Steve Gleason, a former New Orleans Saints safety later diagnosed with amyotrophic lateral sclerosis (ALS), spoke about the power of technology, footage showed doctors manipulating 3D X-rays, a blind man painting, and a soldier witnessing the birth of his child over a webcam.

For Gleason, who was three years into his diagnosis, that ad was the start of his relationship with Microsoft. A year later at the company’s hackathon, a team of engineers was tasked with making the Surface mobile computer that Gleason was using more accessible for him and others with ALS.

While skeptical at first, Gleason found the Microsoft team came through. By the time he arrived at the company’s 500-acre headquarters in Redmond, Washington, engineers were working on the tablet and testing solutions to the problems Gleason had discovered while using the device.

“What has been most impressive is the focus on the needs of the user and not the benefits of the company,” Gleason wrote in an email response to ALS News Today.

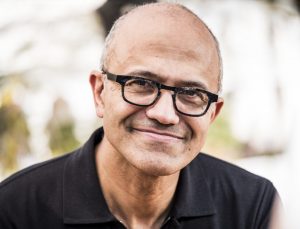

The inclusive design process behind repurposing the Surface for ALS patients is an example of CEO Satya Nadella’s vision for greater accessibility.

A culture of empathy

Empathy is Nadella’s favorite word. It informs Microsoft’s mission statement, company culture, and the products it creates. For him, design thinking is empathy.

Nadella learned empathy in part from his son, Zain, who was deprived of oxygen during birth, which led to cerebral palsy, and from his daughter, who has a learning disability. In an interview with Bloomberg’s David Rubenstein, Nadella said it took him four to five years to fully accept Zain’s situation and “see life through his eyes and then do my duty as a father.”

While empathy was key for Nadella as a father, it also has been an important part of how he runs Microsoft. “I think empathy is at the core of innovation,” he said in the Bloomberg interview.

“It seems that aspect of leadership instilled itself in the culture of Microsoft,” Gleason said.

Nadella furthered the companywide emphasis on accessibility by appointing Jenny Lay-Flurrie as chief accessibility officer in January 2016. Since starting at Microsoft 15 years ago on the Hotmail customer support team in London, Lay-Flurrie has noticed a palpable change in the outlook toward disability.

“Over the years I’ve watched that grow and manifest into a company culture,” Lay-Flurrie said.

She said her team brings “personal expertise as people with disabilities, our academic and nerdy interest in accessibility and embed that into the design process of our products.”

Before taking on the role of chief accessibility officer, Lay-Flurrie worked as senior director of accessibility, online safety and privacy, and had been chair of the disability employee resource group, where Nadella was the executive sponsor.

Lay-Flurrie describes herself as “profoundly” deaf, meaning she can’t hear conversations but can hear enough of her own voice to maintain her British accent.

The interview with ALS News Today was conducted over Microsoft Teams, and she was quick to list the accessibility features that helped her: artificial intelligence (AI) captioning; blur screen (built by deaf engineers for lip-reading); her American Sign Language interpreter, Belinda Bradley, pinned to her screen; and the option of using text-to-speech.

Her hope is that what excites her and other employees about using Microsoft technology will translate into what consumers feel when downloading a program or unboxing a piece of hardware.

“We manage accessibility like a business,” Lay-Flurrie said. “That’s really my core job as the [chief accessibility officer] to make sure that customers can trust what they get.”

Social model of disability

Part of what is taught at the Inclusive Tech Lab, the gaming arm of Microsoft that focuses on accessibility, is the social model of disability.

Bryce Johnson, head of the lab that created the popular Xbox Adaptive Controller for people with disabilities, acknowledges that not everyone uses that definition, but the model is evident in the lab’s product development.

The model’s core idea is that when a person with a disability is unable to use a technology, the fault lies with the product designer, not the disabled person. Similarly, if a public building lacks a ramp, the architect is at fault, not the person in a wheelchair trying to enter.

“If someone couldn’t use that Xbox controller because of the way it was designed, we had to recognize we were causing the barrier,” Johnson said. “The Adaptive Controller was meant to be the controller for everyone else.”

During the design phase of the Adaptive Controller, engineers focused on its “primary use case.” Johnson had to remind them a controller already existed — the classic Xbox controller. What they were doing here was reinventing what a controller should be for those with a disability.

“It was all based on that core design principle of how can you empower people in a way that was affordable, scalable and adaptable,” Lay-Flurrie said.

Function was important to developing the controller, but so was form. One of the five organizations that partnered with the company reminded Johnson and his team about the controller’s appearance. Richard Ellenson, CEO of the Cerebral Palsy Foundation, was adamant that the controller look as close to a regular Xbox controller as possible.

Once it came on the market, Johnson heard stories of children who had resisted using other accessibility devices at school but embraced the Adaptive Controller. It was proof that the social model of disability, combined with empathy (putting oneself in the shoes of another), worked.

“Nothing about us without us” — the unofficial Inclusive Tech Lab slogan — was on display here, Johnson said. It forced his team to think about ways they could reduce the stigmatizing effect of adaptive technology.

Using technology to help people with disabilities eventually will help everyone, Gleason said. In the near future, he predicts, people will use their eyes to control machines and, further down the line, their brains might give commands directly.

“I see technology for disabled people, similar to what I’m using today, I see this type of technology as the training ground that will eventually impact ordinary humans,” Gleason said.

Accessibility as a ‘mission’

Accessibility is evident across Microsoft.

At the company’s annual developer conference two years ago, Nadella announced a five-year, $25 million AI for Accessibility project. The project provides cash grants, Microsoft tools, cloud data storage, and employee expertise.

It has awarded more than 40 grants to organizations and companies working on ways to use AI to help those with disabilities. The grants have yielded products that help people with autism interact with faces, teach children braille, and help individuals with disabilities find employment.

Apart from the grant program, Microsoft has created an app for iOS and Android called Seeing AI, which helps blind people navigate their world. Users take photos and a voice assistant reads back a description of them. The app has completed 20 million tasks since it was released in 2017, including identifying a soup can, reading a menu, and describing people.

“Our mission at Microsoft is to empower every person and organization on the planet to achieve more,” Lay-Flurrie said. “That is meant to be taken literally. Every person.”